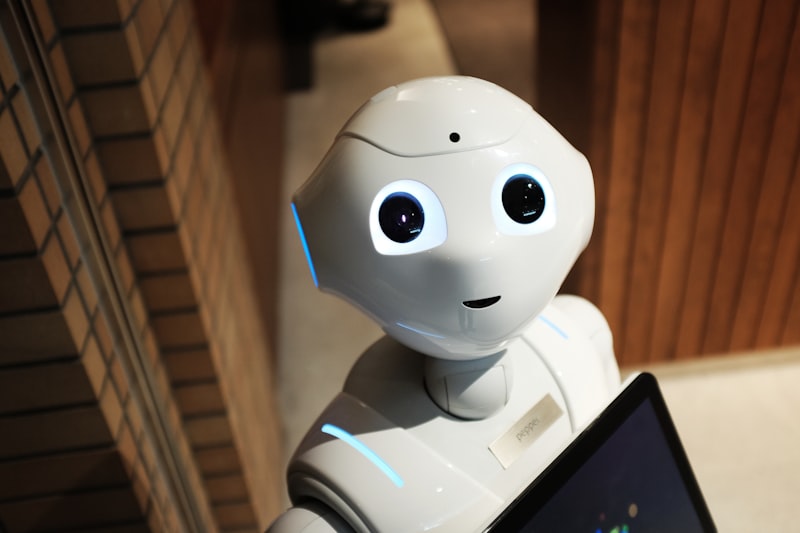

This Robot Taught Itself to Talk by Staring at Its Own Reflection

Columbia Engineering researchers built a robot that learned realistic lip movements by watching itself in a mirror and studying human videos online. Nobody programmed the motions explicitly: it worked out how to speak and sing with synchronized facial motion from observation alone.

Mirror, Mirror

A robot at Columbia Engineering just did something that should make you pause. It learned to move its lips in time with speech, not by being programmed and not by being trained on labeled datasets, but by watching its own reflection and videos of people.

The researchers gave the robot a mirror. They gave it access to human videos online. And then they let it figure out how mouths work. No explicit instructions about lip positions. No hand-coded phoneme maps. Just observation, experimentation, and self-correction.

The result? A robot that can speak and sing with synchronized facial motion that's eerily close to natural human lip movements. And it learned the way a baby might: by watching, trying, and watching again.

Why This Is Different

We've had robots that can move their lips before. But they were typically programmed by hand: engineers mapped phonemes to motor positions, built lookup tables, and fine-tuned the timing manually. It's tedious, brittle, and it never quite looks right. The "uncanny valley" of robotic speech has persisted for decades.

What Columbia's team did was throw away the manual approach entirely. Instead of telling the robot how to move its face, they gave it a self-supervised learning loop:

- Watch human videos to understand what natural lip movements look like

- Watch itself in a mirror to understand its own face and motor capabilities

- Try to reproduce what it saw, observe the result, and adjust
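To make that loop concrete, here is a toy sketch in Python. It is not the Columbia team's system. The two-motor "face", the `mirror` function, and every number in it are invented for illustration. The point is only the shape of the loop: babble in front of the mirror, remember what each command looked like, then chase a target lip shape by trial and error.

```python
import random

def mirror(command):
    """Hypothetical stand-in for the camera and mirror. Maps two motor
    commands (jaw, lip corners), each in [0, 1], to two observed lip
    features (mouth opening, mouth width). The learner never reads this
    formula; it only sees the outputs."""
    jaw, corners = command
    opening = 0.8 * jaw + 0.1 * corners
    width = 0.3 * corners - 0.2 * jaw * corners
    return (opening, width)

def distance(a, b):
    """Euclidean distance between two observed lip shapes."""
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def babble(n_trials, rng):
    """Watch itself: try random commands and record what the mirror shows."""
    memory = []
    for _ in range(n_trials):
        command = (rng.random(), rng.random())
        memory.append((command, mirror(command)))
    return memory

def imitate(target, memory, rng, n_refine=200, step=0.05):
    """Try, observe, adjust: start from the remembered command whose
    reflection was closest to the target, then keep any small random
    tweak that brings the reflection closer."""
    command, seen = min(memory, key=lambda pair: distance(pair[1], target))
    best_error = distance(seen, target)
    for _ in range(n_refine):
        trial = tuple(
            min(1.0, max(0.0, c + rng.uniform(-step, step))) for c in command
        )
        error = distance(mirror(trial), target)
        if error < best_error:
            command, best_error = trial, error
    return command, best_error

rng = random.Random(0)          # fixed seed so runs are repeatable
memory = babble(500, rng)       # self-observation in the mirror
target = (0.45, 0.12)           # made-up lip shape "seen" in a human video
command, error = imitate(target, memory, rng)
print(f"motor command: {command}, remaining error: {error:.4f}")
```

Nothing in `imitate` knows how the motors map to lip shapes. It only compares what it sees with what it wants, which is the whole idea. A real system would presumably swap the lookup and random tweaks for learned models, but the loop is the same.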

This resembles the way humans learn to speak. Babies spend months watching faces before they start babbling, and they use auditory and visual feedback to refine their own mouth movements over time. This robot is doing a compressed version of that same journey.

The Implications Go Way Beyond Lip-Sync

Let's be honest: a robot that can sync its lips to speech is cool, but it's not going to change the world by itself. What matters is the method.

If a robot can learn something as physically nuanced as facial motion through self-observation and unstructured online video, what else can it learn? Gestures? Posture? The subtle body language cues that make human-robot interaction feel natural instead of creepy?

The Columbia team's approach suggests a future where robots don't need to be explicitly programmed for every physical behavior. Instead, they learn by doing — by trying things, observing the results, and getting better over time. It's the difference between building a robot and raising one.

The Mirror Test, Revisited

There's a poetic irony here. The "mirror test" has long been used in animal cognition research to determine whether a creature has self-awareness — can it recognize itself in a mirror? Dolphins, great apes, and elephants pass. Most robots don't even get asked.

This robot isn't passing the mirror test in the consciousness sense. It doesn't "know" it's looking at itself. But it's using its reflection as a learning tool — which, in some ways, is more interesting. It doesn't need self-awareness to use self-observation productively.

We've spent decades asking whether machines can think. Maybe the more interesting question is whether machines can practice.

This one just answered yes.
